Kubernetes Orchestration in 2026: Container Management, Scaling, and DevOps Efficiency

As organizations scale microservices and AI-powered workloads across multi-cloud environments, managing containers becomes a major challenge. Kubernetes orchestration solves this by automating deployment, scaling, and operations. Many of these modern architectures are built through cloud application development services that prioritize scalability, resilience, and portability from the outset.

Recent industry data shows that over 82% of organizations run Kubernetes in production, with nearly two-thirds using it for AI and machine learning workloads. This shift reflects Kubernetes moving beyond basic container management to support high-performance, data-intensive applications at scale.

In 2026, enterprises are prioritizing automation, cost efficiency, and faster release cycles, making Kubernetes orchestration a core part of their cloud-native strategy. It not only ensures application reliability but also helps teams manage infrastructure dynamically without increasing operational overhead.

For businesses building modern digital platforms, Kubernetes orchestration has become a core capability for consistent performance, faster innovation, and efficient scaling.

This blog covers its fundamentals, key components, benefits, use cases, and best practices for managing containers at scale.

Kubernetes orchestration refers to the automated management of containerized applications across a cluster of machines. Its primary purpose is to ensure that applications run reliably, scale efficiently, and remain available without manual intervention.

Instead of requiring teams to manage individual containers by hand, Kubernetes schedules workloads, maintains the desired number of instances, and automatically recovers from failures.

In practical terms, Kubernetes orchestration enables:

Kubernetes uses declarative configurations and APIs to define how applications should run. It then continuously monitors and adjusts the system to match the desired state.
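The idea of continuously adjusting the system to match a desired state can be illustrated with a toy reconciliation loop. This is a minimal sketch, not how Kubernetes controllers are implemented: the `ToyController` class, the `app-N` instance names, and the in-memory `running` list are all hypothetical stand-ins for real cluster state.

```python
import itertools


class ToyController:
    """Toy model of a controller that keeps `running` in line with a desired count."""

    def __init__(self, desired_replicas):
        self.desired_replicas = desired_replicas  # the declared (desired) state
        self.running = []                         # the observed (actual) state
        self._ids = itertools.count()             # source of unique instance names

    def reconcile(self):
        """Compare actual with desired state and return the corrective actions taken."""
        actions = []
        # Too few instances: start new ones until the count matches.
        while len(self.running) < self.desired_replicas:
            name = f"app-{next(self._ids)}"
            self.running.append(name)
            actions.append(f"start {name}")
        # Too many instances: stop the most recently started ones.
        while len(self.running) > self.desired_replicas:
            name = self.running.pop()
            actions.append(f"stop {name}")
        return actions


if __name__ == "__main__":
    controller = ToyController(desired_replicas=3)
    print(controller.reconcile())      # initial rollout
    controller.running.remove("app-1") # simulate an instance failing
    print(controller.reconcile())      # the loop replaces the lost instance
```

The operator only changes `desired_replicas`; the loop works out which start or stop actions are needed, which is the essence of the declarative model described above.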

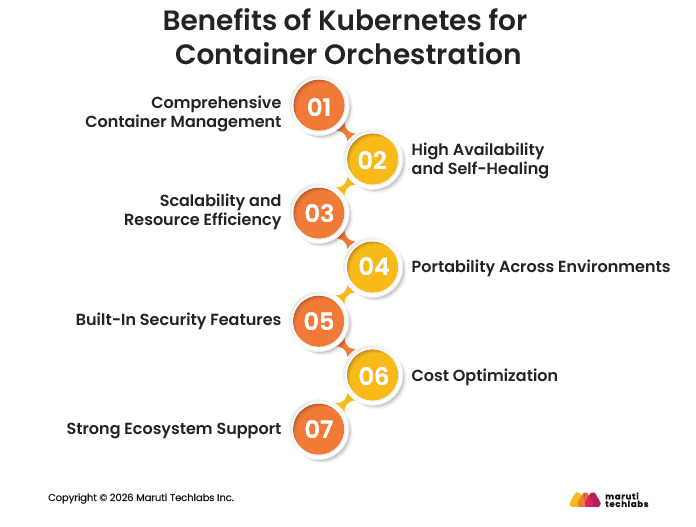

Kubernetes provides numerous benefits for container orchestration by automating critical operational tasks and offering a robust framework for managing containerized applications at scale.

Kubernetes automates container lifecycle tasks such as deployment, monitoring, and recovery, reducing manual effort.

Kubernetes ensures high availability by automatically redistributing workloads when failures occur, minimizing downtime.

Applications can scale up or down based on demand, ensuring optimal resource utilization and consistent performance.
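To make demand-based scaling concrete, the sketch below applies the scaling rule documented for the Kubernetes Horizontal Pod Autoscaler: the desired replica count is the current count multiplied by the ratio of the observed metric to the target metric, rounded up. The function name, the replica bounds, and the sample numbers are illustrative assumptions, and the real autoscaler applies further rules (such as stabilization windows and handling of pods that are not ready) that are omitted here.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Simplified HPA-style calculation: ceil(current_replicas * current / target)."""
    ratio = current_metric / target_metric
    # Skip scaling when the metric is already close enough to the target.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    proposed = math.ceil(current_replicas * ratio)
    # Keep the result inside the configured bounds (illustrative defaults).
    return max(min_replicas, min(max_replicas, proposed))


if __name__ == "__main__":
    # 4 replicas averaging 90% CPU against a 60% target: scale up.
    print(desired_replicas(4, current_metric=90, target_metric=60))
    # 4 replicas averaging 30% CPU against a 60% target: scale down.
    print(desired_replicas(4, current_metric=30, target_metric=60))
```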

Kubernetes works across cloud providers and on-premises environments, making workloads portable between them.

It offers role-based access control, network policies, and secrets management to secure applications and data.

By efficiently allocating resources, Kubernetes helps reduce infrastructure costs and prevent resource wastage.

A large open-source community and ecosystem provide extensive tools, integrations, and support.

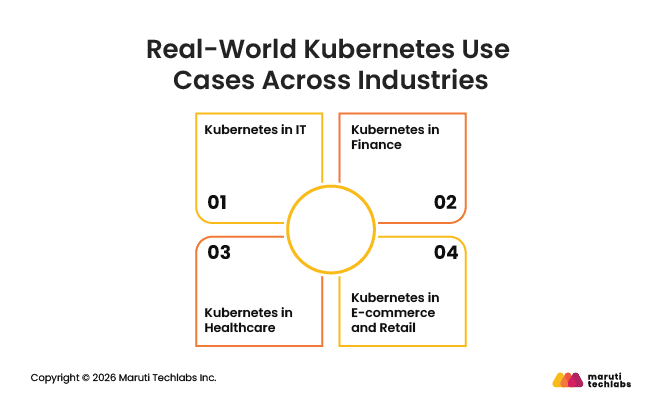

Real-world Kubernetes use cases span industries, helping organizations scale applications, improve reliability, and efficiently manage modern cloud-native workloads. Key applications and examples include:

Kubernetes is widely used in IT to manage complex, resource-intensive workloads that require high scalability, reliability, and efficient resource utilization. It enables teams to streamline application deployment, optimize computing resources, and run large-scale data processing and AI workloads across cloud and on-premises environments.

OpenAI uses Kubernetes to manage large-scale AI workloads across cloud and on-premises environments. With Kubernetes, it achieves:

Kubernetes is widely used in the finance industry to run mission-critical applications that require real-time processing, high availability, and strict compliance.

Banking Circle uses Kubernetes to run a highly scalable, cloud-native payments platform capable of processing over 300 million B2B transactions annually.

By leveraging Kubernetes for container orchestration and autoscaling, Banking Circle achieved:

Healthcare organizations are using Kubernetes to power real-time diagnostics and AI-driven clinical applications that require low latency, scalability, and secure data handling.

Apollo Hospitals uses Kubernetes-based platforms to enable AI-assisted colonoscopy procedures. These systems process live video streams and run real-time detection models during procedures. With Kubernetes, they achieve:

Kubernetes helps e-commerce and retail companies manage fluctuating traffic, ensure application performance, and accelerate deployment cycles. Its autoscaling capabilities allow businesses to handle peak demand without compromising user experience.

Adidas adopted Kubernetes to modernize its digital infrastructure and improve developer productivity, achieving:

Enterprises use Kubernetes to automate, scale, and manage containerized applications, enabling high availability, faster deployments, and efficient resource utilization across complex environments.

Companies like Spotify use Kubernetes to scale infrastructure dynamically and support millions of users during peak demand.

Netflix leverages Kubernetes to manage microservices, enabling faster deployments and high availability.

Adidas uses Kubernetes across multiple cloud environments to improve deployment speed and optimize performance.

Kubernetes and DevOps are closely connected, as both aim to improve how software is developed and delivered. DevOps combines software development (Dev) and IT operations (Ops) to improve collaboration and streamline the development lifecycle.

Kubernetes plays a vital role in this process by providing container orchestration, which helps manage containerized applications efficiently.

Kubernetes supports agile workflows by allowing teams to work on different parts of an application simultaneously. Developers can implement changes and roll out new features quickly. This flexibility helps teams respond to user feedback faster, leading to better software.

Kubernetes works well with Continuous Integration and Continuous Deployment (CI/CD) pipelines that automate testing and deploying code changes.

Integrating container orchestration tools with CI/CD helps keep applications up to date and running smoothly. A well-designed pipeline also saves teams time and reduces errors during deployment.
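One way a pipeline step might hand a new build to Kubernetes is by rendering a Deployment manifest for the freshly built image tag. The following is a sketch under stated assumptions: the function name `render_deployment`, the app name `web`, and the registry URL `registry.example.com` are hypothetical, and a real pipeline would more likely use templating tools such as Helm. The manifest fields themselves follow the `apps/v1` Deployment schema.

```python
import json


def render_deployment(name, image, replicas=3):
    """Build an apps/v1 Deployment manifest as a plain dict."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            # The selector must match the labels on the pod template.
            "selector": {"matchLabels": labels},
            # Roll out gradually: add one new pod at a time, never drop below capacity.
            "strategy": {
                "type": "RollingUpdate",
                "rollingUpdate": {"maxUnavailable": 0, "maxSurge": 1},
            },
            "template": {
                "metadata": {"labels": labels},
                "spec": {"containers": [{"name": name, "image": image}]},
            },
        },
    }


if __name__ == "__main__":
    # Hypothetical image reference; a pipeline would inject the real tag.
    manifest = render_deployment("web", "registry.example.com/web:1.4.2")
    print(json.dumps(manifest, indent=2))
```

Because `kubectl` accepts JSON as well as YAML, the printed output could be piped into `kubectl apply -f -` as the final stage of a pipeline.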

Using Kubernetes for container automation enables development teams to significantly speed up their workflows. They can quickly deploy, scale, and manage applications without manual intervention.

This efficiency helps companies release new features faster, keeping them competitive in the market. Overall, Kubernetes enhances DevOps practices by making development cycles quicker and more reliable.

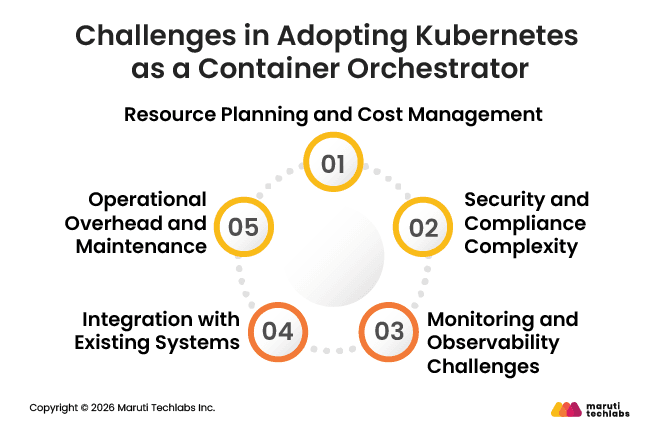

Adopting Kubernetes can be challenging, with teams needing to manage resource usage and costs, secure complex environments, maintain visibility across dynamic workloads, integrate with existing systems, and handle ongoing operations.

Without proper planning and the right tools, these challenges can impact performance, increase overhead, and slow down adoption.

While Kubernetes helps optimize resource usage, poor planning can still lead to over-provisioning, which increases infrastructure costs, or under-provisioning, which degrades application performance.

Continuous monitoring, autoscaling strategies, and resource allocation policies are essential to ensure cost efficiency and system stability.
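A simple right-sizing check shows the kind of analysis that monitoring data makes possible. This is only a sketch: the `Workload` type, the workload names, and the 30% and 90% utilization thresholds are hypothetical choices for illustration, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    cpu_request_m: int  # CPU requested for the workload, in millicores
    cpu_used_m: int     # observed average CPU usage, in millicores


def classify(workload, low=0.3, high=0.9):
    """Label a workload by comparing observed usage with its CPU request."""
    utilization = workload.cpu_used_m / workload.cpu_request_m
    if utilization < low:
        return "over-provisioned"   # paying for capacity that sits idle
    if utilization > high:
        return "under-provisioned"  # little headroom, performance at risk
    return "ok"


if __name__ == "__main__":
    fleet = [
        Workload("checkout", cpu_request_m=1000, cpu_used_m=100),
        Workload("search", cpu_request_m=500, cpu_used_m=480),
        Workload("catalog", cpu_request_m=500, cpu_used_m=300),
    ]
    for w in fleet:
        print(w.name, classify(w))
```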

Kubernetes environments expand the attack surface by introducing multiple layers that need to be secured, such as containers, APIs, and network configurations.

Misconfigured access controls, exposed endpoints, and vulnerable container images all pose risks. Strong security practices such as RBAC, network policies, and regular audits are therefore important, as is investing in cloud security services.
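As an illustration of least-privilege RBAC, the sketch below builds a namespaced Role that grants read-only access to pods, using the `rbac.authorization.k8s.io/v1` schema. The function names, the `pod-reader` role name, and the `staging` namespace are assumptions, and `allows` is a deliberately simplified check (it ignores API groups, wildcards, and resource names), not the authorization logic Kubernetes itself uses.

```python
import json


def read_only_role(namespace, name="pod-reader"):
    """Build a Role manifest that permits only reading pods in one namespace."""
    return {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "Role",
        "metadata": {"namespace": namespace, "name": name},
        "rules": [
            {
                "apiGroups": [""],  # "" refers to the core API group
                "resources": ["pods"],
                "verbs": ["get", "list", "watch"],
            }
        ],
    }


def allows(role, resource, verb):
    """Simplified check: does any rule list both this resource and this verb?"""
    return any(
        resource in rule["resources"] and verb in rule["verbs"]
        for rule in role["rules"]
    )


if __name__ == "__main__":
    role = read_only_role("staging")  # hypothetical namespace
    print(json.dumps(role, indent=2))
    print(allows(role, "pods", "get"))     # reading pods is permitted
    print(allows(role, "pods", "delete"))  # deleting pods is not
```

A Role like this only takes effect once it is bound to a user, group, or service account through a RoleBinding.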

The dynamic nature of Kubernetes, where containers are constantly created and terminated, makes monitoring more complex than in traditional systems.

Without proper observability tools, it becomes difficult to track performance issues, diagnose failures, or maintain system reliability across distributed environments.

Integrating Kubernetes with legacy applications and existing enterprise systems can be challenging, especially when those systems are not designed for containerized environments.

Organizations often need to refactor applications or adopt phased migration strategies to ensure smooth integration without disrupting operations.

Managing Kubernetes in production requires ongoing efforts such as upgrades, patching, scaling, and performance tuning.

Without automation and proper governance, this can increase operational overhead, making it essential to leverage managed services and orchestration tools to maintain efficiency.

Kubernetes has become a foundational technology for container orchestration, enabling organizations to manage complex, distributed applications with greater efficiency and control. As digital ecosystems grow more complex, Kubernetes provides the flexibility and resilience required to support evolving application demands.

However, successfully implementing Kubernetes goes beyond deployment. It requires the right architecture, seamless integration with existing systems, and continuous optimization to ensure performance, cost efficiency, and security.

To fully unlock the value of Kubernetes, businesses must align their container orchestration strategy with broader cloud and DevOps goals.

Maruti Techlabs enables enterprises to successfully adopt and scale Kubernetes by combining deep DevOps expertise with cloud-native engineering capabilities.

The team specializes in containerization, CI/CD pipeline integration, and infrastructure automation, helping businesses build resilient, high-performing applications while reducing operational complexity and time-to-market.

We have helped a leading automotive platform migrate over 200 microservices from a monolithic system to a Kubernetes-based architecture, achieving:

To build and optimize your Kubernetes environment, explore our DevOps Consulting Services for streamlined automation and deployment.

Kubernetes is a container orchestration tool that manages the deployment, scaling, and operation of containers. Docker, on the other hand, is a tool for creating and running containers. Docker focuses on building and running individual containers, whereas Kubernetes manages clusters of containers.

Kubernetes provides several security features, including role-based access control (RBAC), network policies, and secrets management. These features help protect applications by controlling access to resources and ensuring that sensitive information is securely stored and transmitted.

Yes, Kubernetes can run on local machines using tools like Minikube or Docker Desktop. These tools allow developers to create a local Kubernetes cluster for testing and development purposes before deploying applications to production environments.

Helm is a package manager for Kubernetes that simplifies the deployment and management of applications. It lets users define, install, and upgrade applications using reusable templates called charts, making complex deployments easier to manage.

Monitoring applications in Kubernetes can be done using tools like Prometheus and Grafana. These tools help track performance metrics, visualize data, and alert teams about issues, ensuring that applications run smoothly and efficiently in production environments.